Documentation Index

Fetch the complete documentation index at: https://docs.lancedb.com/llms.txt

Use this file to discover all available pages before exploring further.

The Dataset

This task is different from benchmarks like BEIR, which focus on text-based doc retrieval, finding the most relevant documents from a large collection. Here, we want intra-document localization, where the goal is to find a precise piece of information within a single, dense document, in a multimodal setting.The Task: The Document Haystack Dataset

Our benchmark is built on the AmazonScience/document-haystack dataset, which contains 25 visually complex source documents (e.g., financial reports, academic papers). To create a rigorous test, our evaluation follows a per-document methodology:- We process each of the 25 source documents independently.

- Table Creation: For a single source document (e.g., “AIG”), we ingest all pages from all of its page-length variants (from 5 to 200 pages long). This creates a temporary LanceDB table containing approximately 1,230 pages.

- The Task: We then query this table using a set of “needle” questions, where the goal is to retrieve the exact page number containing the answer. A successful retrieval means the correct page number is within the top K results.

- Target metric: We measure both retrieval accuracy (Hit@K) and the average search latency for each query against this table.

Models and Architectures

Our testbed includes a baseline single-vector model and a family of advanced multivector models.Single-Vector (Bi-Encoder) Baseline: openai/clip-vit-base-patch32

A bi-encoder maps an entire piece of content (a query, a document page) to a single vector. The search process is simple: pre-compute one vector for every page, and at query time, find the page vector closest to the query vector.

- Strength: Speed and simplicity.

- Weakness: This creates an information bottleneck. All the nuanced details, keywords, and semantic relationships on a page must be compressed into a single, fixed-size vector. For finding a needle, this is like trying to describe a specific person’s face using only one word.

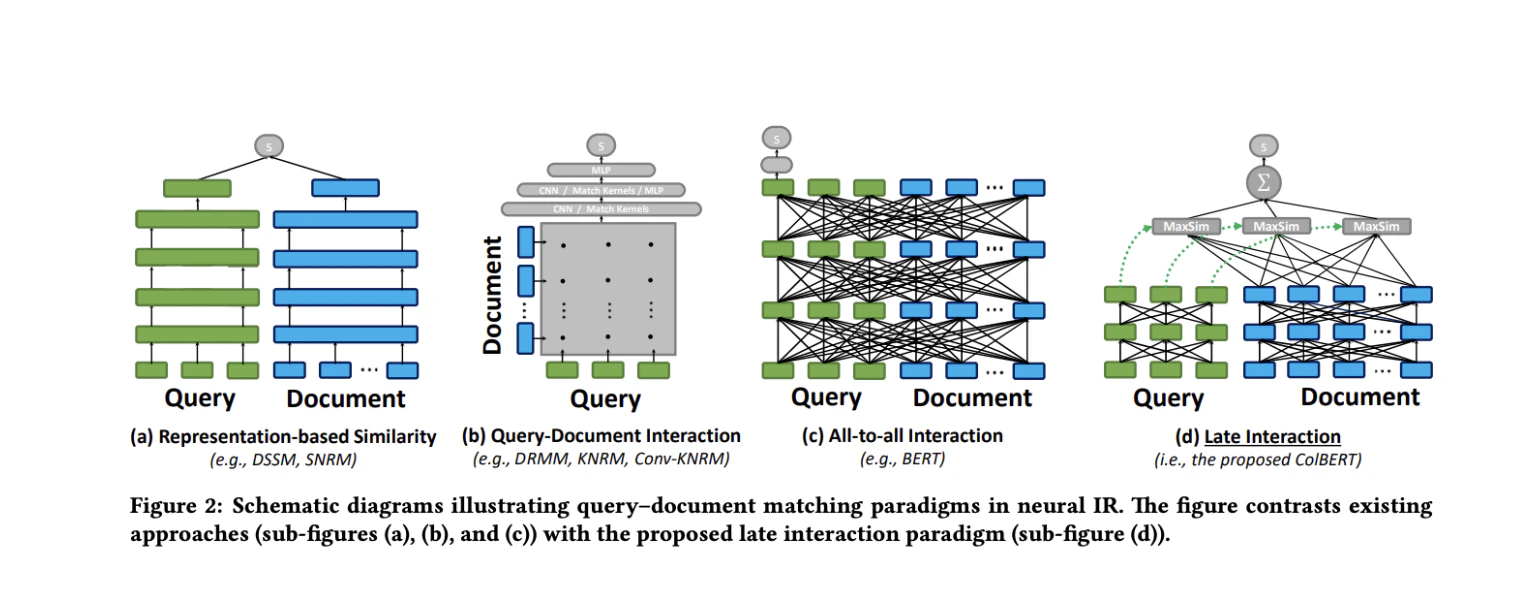

Multi-vector (Late-Interaction) Models

Multi-vector models, pioneered by ColBERT, take a different approach. Instead of one vector per page, they generate a set of vectors for each page—one for every token (or image patch).- Mechanism (MaxSim): The search process is more sophisticated. For each token in the query, the system finds the most similar token on the page. These maximum similarity scores are then summed up to get the final relevance score. This “late-interaction” preserves fine-grained, token-level details.

- The Models: We used several vision-language models adapted for this architecture, including

ColPali,ColQwen2, andColSmol. While their underlying transformer backbones differ, they all share the ColBERT philosophy of representing documents as a bag of contextualized token embeddings.

Different Retrieval Strategies Used

A full multivector search is powerful but computationally intensive. Here are five strategies for managing it, complete with LanceDB implementation details.1. base: The Gold Standard (Full Multi-vector Search)

This is the pure, baseline late-interaction search. It offers the highest potential for accuracy by considering every token.

LanceDB also integrates with ConteXtualized Token Retriever (XTR) , an advanced retrieval model that prioritizes the most semantically important document tokens during search. This integration enhances the quality of search results by focusing on the most relevant token matches.

LanceDB Implementation:

Python

2. flatten: Mean Pooling

This strategy “flattens” the set of token vectors into a single vector by averaging them. This transforms the search into a standard, fast approximate nearest neighbor (ANN) search.

LanceDB Implementation:

Python

3. max_pooling

This is a variation of flatten. max_pooling takes the element-wise max across all token vectors instead of the mean. The implementation is identical to flatten, just with a different aggregation method (.max(axis=0)).

4. flatten and multivector rerank: The Hybrid “Optimization”

This two-stage strategy aims for the best of both worlds. First, use a fast, pooled-vector search to find a set of promising candidates. Then, run the full, accurate multivector search on only those candidates.

LanceDB Implementation:

This requires a table with two vector columns.

Python

5. hierarchical token pooling: Compressing the Haystack

This is an indexing-time strategy that aims to reduce the storage footprint and computational cost of multivector search by reducing the number of vectors per document. Instead of using every token vector, it clusters semantically similar tokens together and replaces them with a single, averaged vector.

- Mechanism: For each document, it computes the similarity between all token vectors, performs hierarchical clustering to group them, and then mean-pools the vectors within each cluster. This results in a smaller, more compact set of token vectors representing the document.

- Goal: To reduce memory and disk usage while attempting to preserve the most important semantic information, potentially offering a middle ground between the high accuracy of

basesearch and the speed of pooled methods.

base multivector search, but the data is pre-processed before ingestion.

Python

The Results

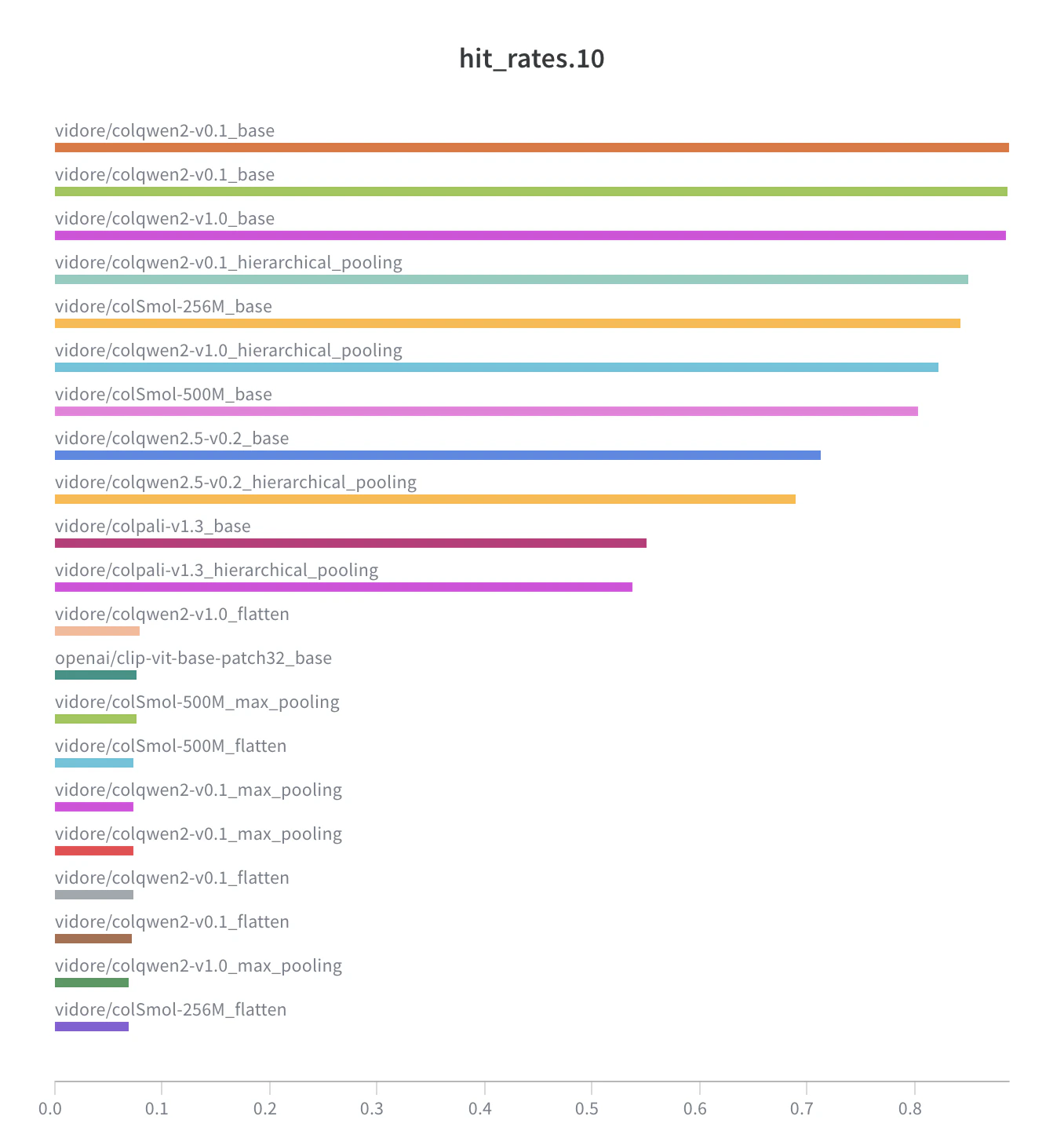

For a “needle in a haystack” task, retrieval accuracy is the primary metric of success. The benchmark results reveal a significant performance gap between the full multivector search strategy and common optimization techniques.Baseline Performance: Single-Vector Bi-Encoder

First, we establish a baseline using a standard single-vector bi-encoder model,openai/clip-vit-base-patch32. This represents a common approach to semantic search but, as the data shows, is ill-suited for this task’s precision requirements.

| Model | Strategy | Hit@1 | Hit@5 | Hit@20 | Avg. Latency (s) |

|---|---|---|---|---|---|

openai/clip-vit-base-patch32 | base | 1.6% | 4.7% | 11.8% | 0.008 s |

Multi-vector Model Performance

We now examine the performance of multivector models using different strategies. The following table compares thebase (full multivector), flatten (mean pooling), and rerank (hybrid) strategies across several late-interaction models.

| Model | Strategy | Hit@1 | Hit@5 | Hit@20 | Avg. Latency (s) |

|---|---|---|---|---|---|

vidore/colqwen2-v1.0 | flatten | 1.9% | 5.5% | 11.9% | 0.010 s |

vidore/colqwen2-v1.0 | flatten and multivector rerank | 0.3% | 1.5% | 7.3% | 0.692 s |

vidore/colqwen2-v1.0 | hierarchical token pooling | 13.7% | 60.5% | 91.6% | 0.144 s |

vidore/colqwen2-v1.0 | base | 14.0% | 65.4% | 95.5% | 0.668 s |

vidore/colpali-v1.3 | flatten | 1.7% | 4.5% | 9.3% | 0.008 s |

vidore/colpali-v1.3 | flatten and multivector rerank | 0.6% | 2.3% | 6.9% | 0.949 s |

vidore/colpali-v1.3 | hierarchical token pooling | 10.8% | 41.7% | 64.8% | 0.189 s |

vidore/colpali-v1.3 | base | 11.3% | 42.3% | 65.6% | 0.936 s |

vidore/colSmol-256M | flatten | 1.6% | 4.7% | 10.5% | 0.008 s |

vidore/colSmol-256M | flatten and multivector rerank | 0.3% | 1.6% | 7.0% | 0.853 s |

vidore/colSmol-256M | base | 14.4% | 64.0% | 91.7% | 0.848 s |

base strategy outperforms all other techniques. The flattned pooling and reranking strategies perform no better than the single-vector baseline. However, hierarchical token pooling seems like a decent alternative to base considering speed vs accuracy tradeoff. Let’s look at the numbers in detail.

In-Depth Analysis of Pooling Strategies

To further understand the failure of optimization techniques, we compared different methods for pooling token vectors into a single vector:mean (flatten), max.

| Model & Pooling Strategy | Hit@1 | Hit@5 | Hit@20 | Avg. Latency (s) |

|---|---|---|---|---|

vidore/colqwen2-v1.0 (mean_pooling) | 1.9% | 5.5% | 11.9% | 0.010 s |

vidore/colqwen2-v1.0 (max_pooling) | 1.4% | 4.2% | 11.2% | 0.011 s |

vidore/colqwen2-v1.0 (base) | 14.0% | 65.4% | 95.5% | 0.668 s |

- The Failure of Simple Pooling: The

flatten(mean pooling) andmax_poolingstrategies fail to improve upon the baseline. This is because their aggressive compression destroys the essential localization signal. The resulting single vector represents the topic of the page, not the specific needle on it. - The Failure of

flatten and multivector rerank: This hybrid strategy is the worst-performing of all. The reason is a fundamental flaw in its design for this task: the first stage uses a simple pooled vector to retrieve candidates. Since this pooling eliminates the localization signal, the initial candidate set is effectively random. hierarchical token pooling: By clustering and pooling tokens at indexing time, it reduces the number of vectors per page (in our case, by a factor of 4). This intelligently compresses the data, while preserving enough token-level detail in multivector setting. It achieves a Hit@20 of 91.6%, only slightly behind thebasestrategy’s 95.5%, but is significantly faster.basemultivector Search: The vanilla, un-optimizedbasemultivector search remains the most accurate strategy. Preserving every token vector provides the highest guarantee of finding the needle, but this comes at the highest computational cost.

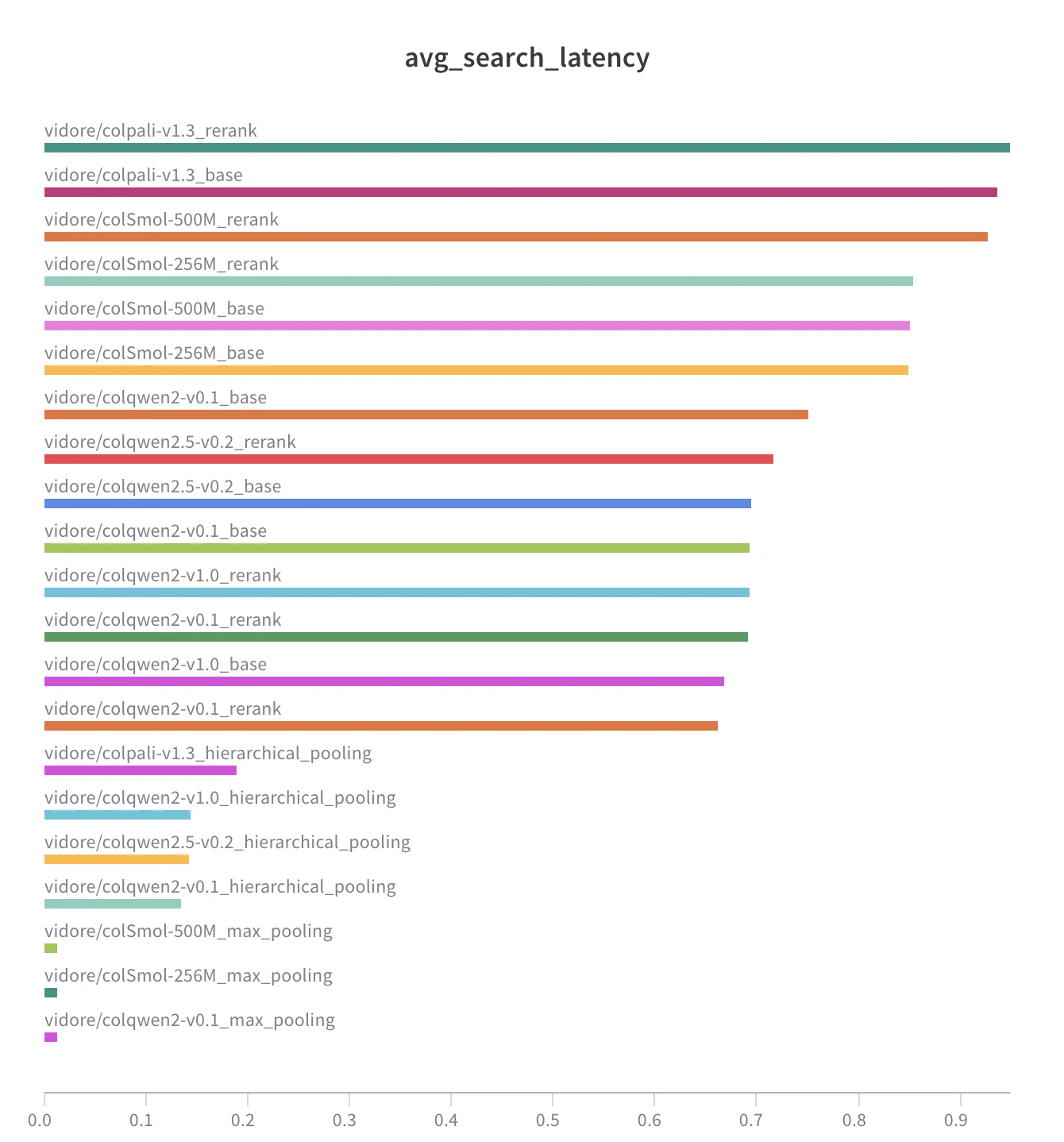

Latency:

Strategy (on vidore/colqwen2-v1.0) | Avg. Search Latency (s) | Hit@20 Accuracy |

|---|---|---|

flatten (Fast but Ineffective) | 0.010 s | 11.9% |

flatten and multivector rerank (Slower and Ineffective) | 0.692 s | 7.3% |

hierarchical token pooling (Accurate & Fast) | 0.144 s | 91.6% |

base (Most Accurate) | 0.668 s | 95.5% |

| Latency reported is as seen on NVIDIA H100 GPUs |

Practical Considerations

The accuracy ofbase multivector search is impressive, but its computational intensity has historically limited its use. hierarchical token pooling as a viable strategy creates a new, practical sweet spot on the accuracy-latency curve, making high-precision search accessible for a wider range of applications.

Search Latency and Computational Complexity

As the benchmark data shows, the search latency forbase multivector search is orders of magnitude higher than for single-vector (or pooled-vector) search. It’s important to note that the reported ~670ms latency is an average from per-document evaluations. In this benchmark, each of the 25 documents is processed independently. All pages from a single document’s variants (ranging from 5 to 200 pages) are ingested into a temporary table, resulting in a table size of approximately 1,230 rows (pages) per evaluation. The search is performed on this table, and then the table is discarded. This highlights a significant performance cost even on a relatively small, per-document scale. This stems from a fundamental difference in computational complexity:

- Modern ANN Search (for single vectors): Algorithms like HNSW (Hierarchical Navigable Small World) provide sub-linear search times, often close to

O(log N), whereNis the number of items in the index. This allows them to scale to billions of vectors with millisecond-level latency. - Late-Interaction Search (Multi-vector): The search process is far more intensive. For each query, it must compute similarity scores between query tokens and the tokens of many candidate documents. The complexity is closer to

O(M * Q * D), whereMis the number of candidate documents to score,Qis the number of query tokens, andDis the average number of tokens per document.Hierarchical token poolingdirectly attacks this problem by reducingD, leading to a significant reduction in search latency.

When to Use Multi-Vector Search

Given these constraints, the choice of strategy depends on the specific requirements of the application.- For Maximum Precision (

base): In domains where the cost of missing the needle is extremely high, the fullbasesearch is the most reliable option. - For a Balance of Precision and Performance (

hierarchical token pooling): This is the ideal choice for many applications. It makes high-precision search practical for larger datasets and more interactive use cases where the sub-second latency of thebasesearch may be too high. It significantly lowers the barrier to entry for adopting multivector search. It should still not be seen as a drop-in replacement for ANN, as it still requires more computational resources than single-vector search. - For General-Purpose Document Retrieval (

flatten/ single-vector): For large-scale retrieval where understanding the “gist” is sufficient or where in cases where large-context text-based models suffice, single-vector search remains the most practical and scalable solution.